In 1910, a machinist named Thomas Sullivan caught his sleeve in the gears of a textile loom in Lawrence, Massachusetts. The accident crushed his right hand so severely that doctors amputated three fingers. Within six weeks, Sullivan's family had lost their apartment, his wife had taken in washing to pay for food, and his children had dropped out of school to work in the same factory that maimed their father.

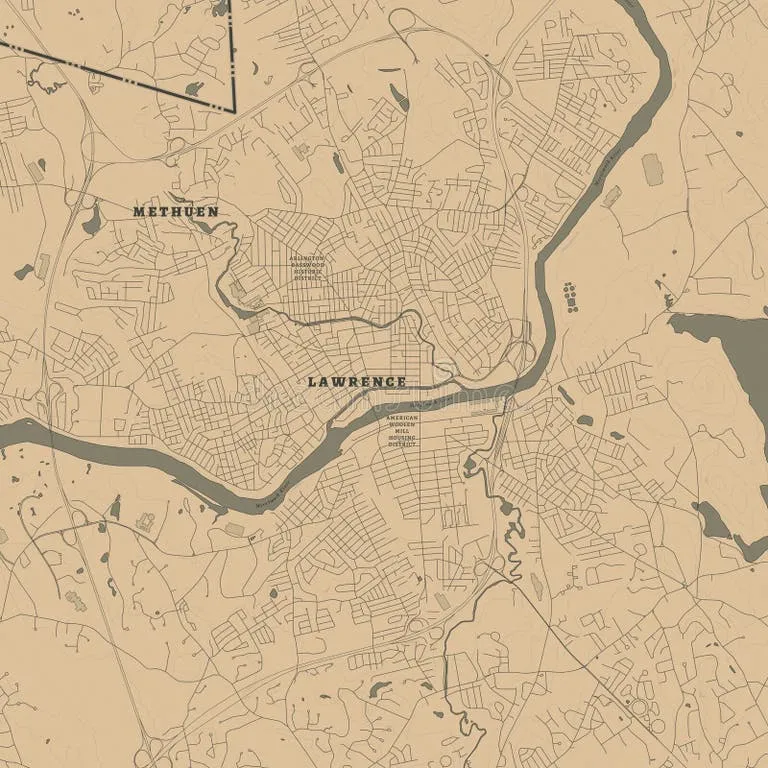

Photo: Lawrence, Massachusetts, via thumbs.dreamstime.com

This wasn't an unusual story. It was Tuesday.

When Work Meant Walking Into a War Zone

More than a century ago, American workplaces killed and injured workers at rates that would shock modern sensibilities. In 1913, an estimated 23,000 workers died on the job, equivalent to losing the entire population of a small city every year. Steel mills, mines, and factories operated like medieval battlefields where losing limbs was considered part of the job description.

Workers had no safety net beyond their own families and perhaps a church collection plate. No workers' compensation existed. No disability insurance. No OSHA inspectors prowling factory floors with clipboards and citations. When you got hurt, you faced a simple equation: heal fast or starve slowly.

The Triangle Shirtwaist Factory fire of 1911 crystallized America's workplace safety crisis. When 146 garment workers — mostly young immigrant women — died because management had locked the exit doors, the public finally understood that workplace injuries weren't just personal tragedies. They were systemic failures that demanded systematic solutions.

The Great Safety Awakening

The transformation didn't happen overnight. In 1911, Wisconsin passed the first state workers' compensation law to take lasting effect, but it took decades for the concept to spread nationwide. Early safety regulations focused on the most obviously dangerous jobs (mining, steel production, and chemical manufacturing), where death rates resembled those of active combat zones.

By the 1950s, American workplaces had begun their metamorphosis. Safety engineers emerged as a new profession. Companies discovered that preventing injuries cost less than paying for them. Union contracts started including safety provisions alongside wage demands. The workplace death rate dropped from 61 per 100,000 workers in 1913 to fewer than 20 by 1960.

But the real revolution came in 1970, when Congress passed the Occupational Safety and Health Act and created the Occupational Safety and Health Administration. OSHA didn't just suggest workplace improvements; it mandated them, backed by federal inspectors who could issue citations, levy fines that got management's attention, and seek court orders to halt imminently dangerous work.

From Catastrophe to Paperwork

A workplace injury today unfolds in a completely different universe from Thomas Sullivan's. Modern workers who get hurt face a choreographed response system that would seem like science fiction to their great-grandparents.

When a contemporary warehouse worker strains their back lifting a package, they trigger an immediate chain reaction: incident reporting, prompt medical evaluation, an ergonomic assessment of their workstation, modified duty assignments, and a return-to-work coordinator who monitors their recovery. What once meant potential financial ruin now means filling out forms and attending physical therapy sessions.

The statistics tell the story of this transformation. Workplace fatalities have dropped to roughly 3.5 per 100,000 full-time workers annually. Injuries that once ended careers now result in brief medical leaves and ergonomic keyboard adjustments. America's most dangerous traditional industries, including mining, construction, and manufacturing, now have safety protocols so comprehensive that workers spend more time in safety training than their predecessors spent in actual job training.

The New Invisible Injuries

Yet this remarkable progress has created its own blind spots. While we've largely conquered the dramatic injuries that once plagued American workers, we've simultaneously created new categories of workplace harm that our great-grandparents couldn't have imagined.

Repetitive stress injuries from computer work now affect millions of office workers. Workplace stress and burnout have reached epidemic proportions in jobs that previous generations would have considered cushy. Mental health issues linked to workplace conditions have exploded, but they don't show up in traditional injury statistics.

The gig economy has also created a new class of workers who fall outside traditional safety nets. Uber drivers, freelancers, and contract workers often lack the protections that full-time employees take for granted. They've traded the physical dangers of yesterday's workplace for the economic uncertainty that Thomas Sullivan would have recognized.

When Safety Became an Expectation

Perhaps the most remarkable change isn't in the statistics — it's in our expectations. Modern American workers assume they'll come home with the same number of fingers they started with. They expect their employers to provide safe working conditions, not as a favor, but as a basic requirement.

This assumption would have seemed absurdly optimistic to workers in 1910, when industrial accidents were considered as inevitable as weather. The idea that the government would send inspectors to ensure workplace safety, or that companies would spend millions on ergonomic assessments, would have struck them as fantasy.

Yet here we are, in a world where a workplace injury is more likely to result in a workers' compensation claim than a family's financial destruction. It's a transformation so complete that we've forgotten how remarkable it is — until we remember that just four generations ago, going to work meant accepting that you might not come home whole.

Thomas Sullivan's great-grandson probably works in an office, complains about his ergonomic chair, and has never considered that his daily commute to work is statistically more dangerous than his actual job. That's not just progress — that's a revolution so successful we've forgotten it happened.